Why Digital Sovereignty in Europe Is at Risk | Interview with Tuta

Explore how Europe’s digital sovereignty is undermined by Big Tech, encryption backdoors, and chat control. Insights from Tuta’s Hanna Bozakov on...

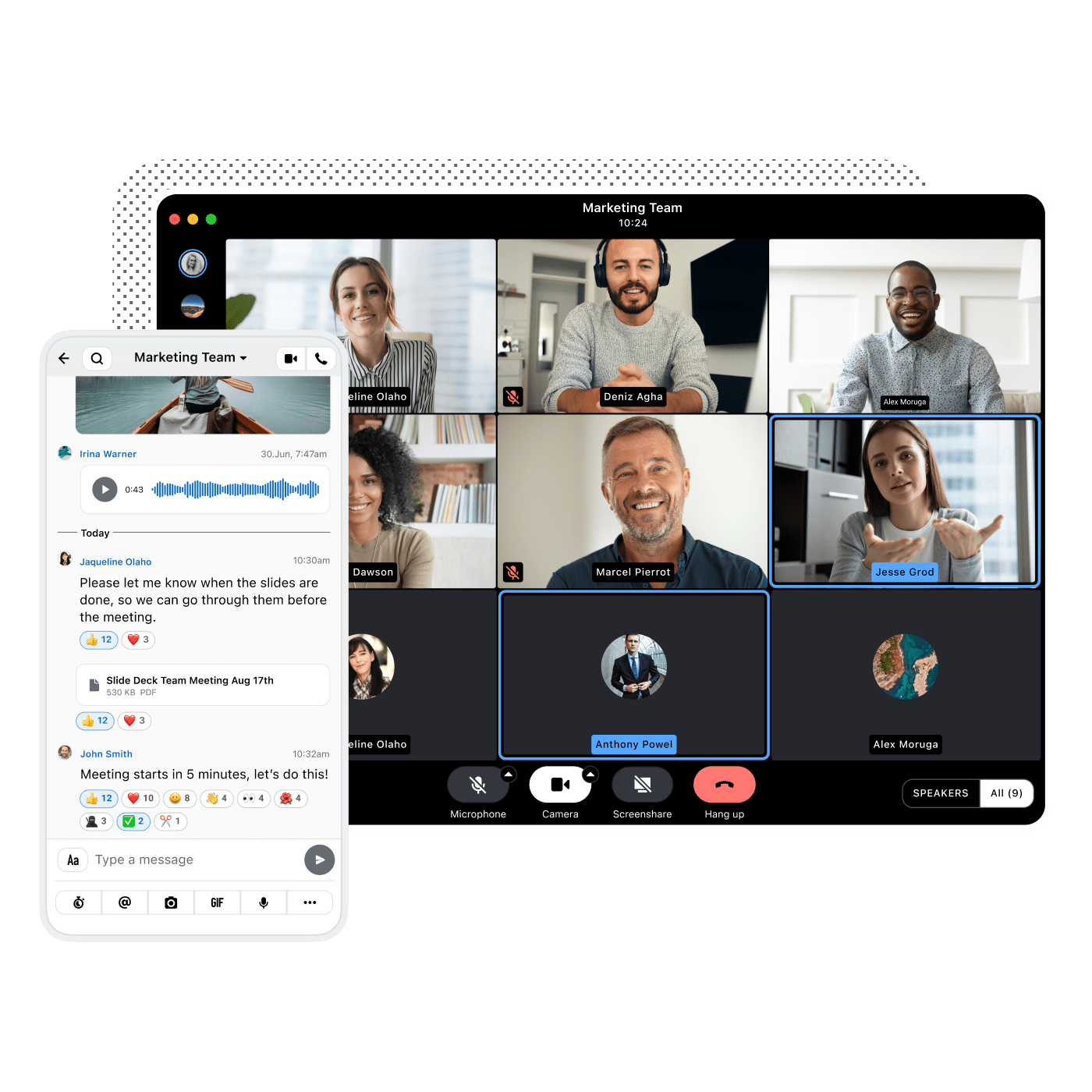

Despite prioritizing encryption and EU data hosting, European organizations still rely on US platforms like Microsoft Teams. Explore the four key...

Despite strong regulation, only 16% of tech and policy leaders believe Europe will achieve digital sovereignty. Discover what’s holding organizations...

In a cyberattack, your primary networks may go dark. Learn why fallback communication channels are essential for crisis continuity, resilience and...

Explore how NIS2 regulations are reshaping crisis communication and why end-to-end encryption, traceability, and fallback channels are now essential...

Wire and EBCONT have joined forces to deliver secure, sovereign communication for public institutions and critical infrastructure in Europe. Learn...

Matrix talks openness but sells closed-source security. Wire exposes the contradictions in Matrix’s model and makes the case for true transparency,...

Looking for a European alternative to Microsoft or Google? Look no further! Read our blog and find safe and secure solutions to 6 key...

How US surveillance laws override EU privacy rules, why Microsoft and other tech giants can't keep their promises on data sovereignty, and what the EU...